Difference between revisions of "Thread:Talk:BeepBoop/Understanding BeepBoop/Property of gradient of cross-entropy loss with kernel density estimation"

m |

m |

||

| Line 13: | Line 13: | ||

But things start to get interesting when K is different. | But things start to get interesting when K is different. | ||

| − | The scale of K(x - x_i) doesn't matter at all (e.g. when all data points are far from target distribution, or near). The effective learning rate is same when two data points are equally near the target distribution, or when they are equally far from the target distribution. | + | The common scale of K(x - x_i) doesn't matter at all (e.g. when all data points are far from target distribution, or near). The effective learning rate is same when two data points are equally near the target distribution, or when they are equally far from the target distribution. |

This behavior disobeys the intuition that when data points are far from target distribution, it should not learn as much as when they are near. | This behavior disobeys the intuition that when data points are far from target distribution, it should not learn as much as when they are near. | ||

Actually when K(x - x_i) takes the form of e^(-|x - x_i|), which is laplace distribution, there is one more interesting property: The distance between the center of target uniform distribution and the closest data point to it can be subtracted from all the data points, without affecting the gradient. Imagine that all the data points are moving to the center of the target distribution at the same speed, and stops as soon as the first data point aligns. | Actually when K(x - x_i) takes the form of e^(-|x - x_i|), which is laplace distribution, there is one more interesting property: The distance between the center of target uniform distribution and the closest data point to it can be subtracted from all the data points, without affecting the gradient. Imagine that all the data points are moving to the center of the target distribution at the same speed, and stops as soon as the first data point aligns. | ||

Latest revision as of 11:23, 5 February 2022

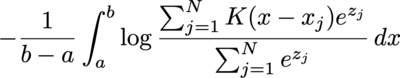

I'm quite curious about the behavior of the cross entropy loss between a target uniform distribution and kernel density estimation with softmax weight:

where a and b is the lower and upper bound of the target uniform distribution, K is the kernel function (assume normalized), x_j is the angle of the data point, and z_j the logit (weight before softmax).

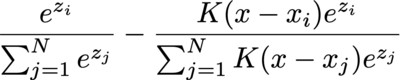

The integral is often calculated by numerical methods, such as binning, so let's consider the gradient of the logit of the i-th data point, and consider only one of the bins (with angle x), ignoring the values multiplied before integral (1 / (b - a)):

It degenerates to ordinary cross entropy loss (the multi-class classification case) with softmax when K is either 1 or 0 (and 1 iif the "label" matches): S_i - 1 when label matches or S_i when label mismatches. (S_i denotes softmax weight of data point i)

But things start to get interesting when K is different.

The common scale of K(x - x_i) doesn't matter at all (e.g. when all data points are far from target distribution, or near). The effective learning rate is same when two data points are equally near the target distribution, or when they are equally far from the target distribution.

This behavior disobeys the intuition that when data points are far from target distribution, it should not learn as much as when they are near.

Actually when K(x - x_i) takes the form of e^(-|x - x_i|), which is laplace distribution, there is one more interesting property: The distance between the center of target uniform distribution and the closest data point to it can be subtracted from all the data points, without affecting the gradient. Imagine that all the data points are moving to the center of the target distribution at the same speed, and stops as soon as the first data point aligns.