Talk:XanderCat

Congrats on breaking the 50% barrier. Seems that you have the planning of your bot on scheme, now it's just the translation into the right code. One small remark: You don't have to have 'zillions of versions' present in the rumble, the details of older versions still are available when not in the participants list anymore. Comparisons between two versions are quite easy to do like [1] . Just click on your bot in the rankings, then the details and a few older versions are shown. Good luck with your further development! --GrubbmGait 08:37, 25 May 2011 (UTC)

- Thanks GrubbmGait, though I'm not sure how much praise I deserve for being officially average. :-P I'm trying out a slightly revised version today, version 2.1. No major component changes, but it modifies the bullet firing parameters, driving parameters, some segmentation parameters, and has improved gun selection. Skotty 20:43, 25 May 2011 (UTC)

Contents

- 1 Version 3.1

- 2 Version 3.2

- 3 Version 3.6

- 4 PBI

- 5 From Robin

- 6 Rethink / XanderCat 4.8+

- 7 Case Analysis

- 8 Which Waves to Surf

- 9 Rolling Average

- 10 #1 Against PolishedRuby

- 11 End of the Road?

- 12 Fixing My Wave Surfing Rolling Depth

- 13 Debugging Request for Rednaxela or GrubbmGait

- 14 Might have to get back into things

- 15 Version 6.x Scores All Over The Map

- 16 New Theory on Performance Issues

- 17 Debugging XanderCat -- What Next?

- 18 Empty Output Files

- 19 New Factor Array Idea

- 20 Next Plans -- XanderCat 9.x -- September, 2011

- 21 New Segmenter

Version 3.1

Interesting results for version 3.1. No real change in rank from 3.0, but using an entirely new drive. I've done away with the borrowed wave surfing drive from BasicGFSurfer and replaced it with a drive of my own design.

Despite the new wave surfing drive (which I will call a Stat Drive) which I crafted almost entirely from scratch, I think it shares a lot in common with other wave surfing drives. It's just naturally where you end up when working out the best way to drive. I really haven't tried to tweak the segmentation yet, so I think it can be further improved with a few parameter changes.

Here is what my new drive does (you will likely see a lot in common with other drive strategies out there):

- Segmentation - Much like other wave surfing drives, my Stat Drive supports segmentation. I can't remember what it is actually segmenting on right now (don't have the code in front of me at the moment). I'll add that detail later. It is very easy to change the segmentation parameters. The Stat Drive relies on a separate component to determine the segment or combination of segments, and the segmenters can be swapped in and out easily.

- Tracking danger - Each segment has a fixed number of "buckets" or "bins" that represent the danger at a particular "factor", where a factor represents an angular offset of the robot from an original bearing of the bullet wave origin to the robot at the time the bullet was fired. When a particular factor is determined to be more dangerous, a value is added to the corresponding bin or bins around that factor. Initially when I wanted to add danger, I just added to one bin. One thing I did steal from the BasicGFSurfer wave surfing drive was the manner of adding danger to all the bins, trailing off sharply from the most dangerous bin. I don't know why I didn't think to do this initially. Once my eyes glanced over it, it was obvious it was what I should have been doing from the start.

- Bullet Hits - The most dangerous of events -- actually getting hit by a bullet. When hit by a bullet, the Stat Drive records danger of a certain amount (let's say a value represented by the variable d) to the matching bin of the corresponding segment. One tenth that amount (d/10) is currently added to all other segments, though this is just experimental; I may modify or remove that effect as I tune it a bit more.

- Wave Hits - When a wave hits (but not necessarily a bullet hit), the Stat Drive currently records one fifth the amount of danger (d/5) for the matching bin of the corresponding segment. The idea is that the opponent's gun, likely being a "Guess Factor" gun, might be more likely to aim for that bin next time, so let's try to avoid it. This also probably needs some fine tuning. As part of the same experiment as with bullet hits, one tenth of that amount (d/50) is added to all other segments.

- Wall Avoidance - While I wrote it from scratch myself, wall avoidance right now is doing pretty much the exact same thing as the "wall stick" approach. I have some ideas that would be fancier, but the "wall stick" approach works for now.

- Rolling danger - Rolling danger is the idea of removing danger previously added from bullets or waves that are over a certain age. The Stat Drive is not doing this right now, but I'm planning on experimenting with the idea.

- Figuring out where it can get to - One of the first steps to avoiding a bullet wave is determining how far you can go in each orbital direction before the bullet hits. At first I used some crude approaches to this with the Stat Drive, but they just won't cut it if I want to be really competitive. I now have it predict our position into the future, taking pretty much everything into account (turn rates, acceleration/deceleration rates, wall smoothing, etc) to make the prediction as accurate as possible.

- Figuring out where to go - once we know how far we can go, we have to decide where in that range we want to go. I'm experimenting with a few approaches. For now, it looks for the bin with the lowest value and heads there.

- Figuring out how to get there - Once we know where we want to go, we have to figure out how to get there. This seemed simple enough, but one problem I ran into was overshooting the target and being in the wrong place when the bullet arrives. This problem turned out to be significant in my testing. So I had to do additional work to ensure that if I will reach my target before the wave hits, I slow down before getting there so I land right on target. This sounds easier than it actually was to implement.
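The "Tracking danger" and "Figuring out where to go" steps above can be sketched roughly as follows. This is a minimal illustration, not XanderCat's actual code: the class name `DangerBins`, its methods, and the particular falloff `d / (offset^2 + 1)` are assumptions chosen to show danger "trailing off sharply" from the hit bin.

```java
/** Hypothetical danger bins for one segment; all names here are illustrative. */
final class DangerBins {
    private final double[] bins;

    DangerBins(int binCount) {
        bins = new double[binCount];
    }

    /** Maps a factor in [-1, 1] to the nearest bin index. */
    int indexFor(double factor) {
        double clamped = Math.max(-1.0, Math.min(1.0, factor));
        return (int) Math.round((clamped + 1.0) / 2.0 * (bins.length - 1));
    }

    /** Adds danger d at the hit bin and a sharply decreasing share to every other bin. */
    void addDanger(double factor, double d) {
        int hit = indexFor(factor);
        for (int i = 0; i < bins.length; i++) {
            int offset = i - hit;
            bins[i] += d / (offset * offset + 1.0);
        }
    }

    double get(int index) {
        return bins[index];
    }

    /** Returns the lowest-danger bin index within the reachable range [from, to]. */
    int safestIndex(int from, int to) {
        int best = from;
        for (int i = from + 1; i <= to; i++) {
            if (bins[i] < bins[best]) {
                best = i;
            }
        }
        return best;
    }
}
```

With this shape, a bullet hit would call `addDanger(factor, d)` and a wave hit `addDanger(factor, d / 5)`, matching the amounts described in the list; the reachable range passed to `safestIndex` would come from the position prediction step.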

Where to go from here?

Performance was roughly equal in the rumble to the BasicGFSurfer drive. I need to tweak the segmentation approaches and parameters. I need to tweak the manner of adding danger. And I need to play around with rolling danger to see what effect that has. Once that is done, I don't believe I will make any more changes to the Stat Drive or its use in XanderCat.

I may employ other drives in combination at some point using a "drive selector", an ability that is built into the Xander framework. For example, I would like to build a drive and gun built specifically for "mirror" bots that mirror their opponent's drive; the drive selector would switch to these components whenever a mirror bot is detected.

Outside of drives, my Stat Gun is still a bit crude. I know I can improve there. And then I also have a few other little tricks up my sleeve I would like to try when I have the time.

It's neat to see you taking such a systematic approach with robocode! If I may make a few suggestions:

- If I understand correctly, you add d/10 danger to every bin (except the one that hit you) rather than (in addition to?) adding bin-smoothed danger to each bin. I don't think this will help anything. The bin-smoothed danger should be enough.

- Logging hits from every wave, regardless of whether the other robot hit your robot, will give you a flatter movement; however, you may not actually want a flatter movement, even against GF guns. Before wave surfing was invented, I imagine that GF guns didn't roll their averages very much. Because of this, a flat movement will be worse against these guns. Rather than giving the enemy a flat movement to shoot at (which will make their targeting very close to random), you should move to the same GF repeatedly. When they hit you there, you know there is a peak in their stats, so you move somewhere else, and hopefully they keep shooting there for a while, allowing you to dodge bullets. Did I explain that well?

(I had thought of doing something like that but didn't for the reasons described above. It sounds rather similar to YersiniaPestis, but without the adaptive weighting of the flattener.) --AW 19:52, 6 June 2011 (UTC)

Nice job! Implementing Wave Surfing correctly can be a huge undertaking. My first suggestion would be to try just disabling the "every wave" logging of hits. You're right this should make you more unpredictable to learning guns. What most of us have found is that straight dodging from bullet hits actually works better against the vast majority of guns - only against the best guns does a "flattener" (what we call that mode) help. But more importantly, a flattener also destroys your scores against simple targeters.

I see you're getting 80% vs Barracuda and 88% vs HawkOnFire. Those could both be over 99% with no segmentation. My best advice would be to work on distancing, dive protection, and ironing out bugs until you can get that before trying to refine other aspects. Working on other stuff will only make it harder to fix the core stuff, and you may have to re-tune everything anyway once it's fixed. (Just turning off the flattener may go a long way!)

Good luck! --Voidious 20:37, 6 June 2011 (UTC)

- Thanks for all the suggestions. I've been intentionally trying to come up with a lot of the ideas and code myself, as that makes it more rewarding for me. On the down side, this has made it harder to learn all the terminology used by the Robocode community. Not until both of your comments did I know what a "flattener" was, though I had seen the term pop up here and there. I hadn't spent too much time thinking about what the effects of it would be against different opponents, but your comments give me food for thought. I actually have my system set up now to run different configurations of my robot against a test base of robots, so I am now at the point where I can see some real results rather than just trying to deal with the theoretical. I'll try turning off the flattening and see what happens. Skotty 22:18, 6 June 2011 (UTC)

- I assume by "distancing", you mean trying to keep my robot a reasonable distance away from the opponent. I was thinking maybe try to refine my drive path so that it will move slightly away from the waves if the wave origins are too close, and possibly favoring non-smoothing directions when near a wall and the calculated danger in each direction is similar. "Dive protection" as I understand it is not driving towards the enemy excessively; I don't see how this would happen except to a limited degree when wall smoothing, or on startup before bullets start flying (at the moment, when no bullet waves are in action, XanderCat will just move in a straight but wall-smoothed path, causing it to circle around the edges of the field; I should probably change this to make it move into a more desirable position before bullets start flying, rather than relying on chance). Skotty 17:46, 7 June 2011 (UTC)

Version 3.2

Had a bad night of tweaking where everything I did seemed to make my robot worse. I've turned off the flattening but I don't think I have a proper test bed of opponents to determine what kind of effect it might have. It did increase the score on Barracuda and HawkOnFire, but only to about 90%. I did, however, finish some new anti-mirror components. PolishedRuby 1 is soooo dead. :-D I haven't tested against any other mirror bots yet, but my tests against PolishedRuby put a big smile on my face. As you might guess, when mirroring is detected, I have a drive and gun that work in tandem, where the drive plots a semi-random route in advance that the gun can process to fire on the mirror of the future positions. Awesome. My framework makes it easy to add it on to any existing gun combinations. It's not perfect, but it gets the job done, and can probably be improved a bit more. With the mirror components active, my gun hit ratio on PolishedRuby jumped from something very sad up to a wicked 70%.

I've got my score on Barracuda up to the low 90s, and HawkOnFire up to about 98%. I've disabled the flattening, and I've implemented a new drive that takes command on the first few moments of a round to try and obtain better positioning before bullets start flying. Also, my StatDrive will now change its angle a little to back away from waves when it deems itself too close to them. I also have the anti-mirroring operational. I was going to try and update my StatGun for this version (3.2), but I think I may hold off on that until version 3.3. I want to see what effects the changes so far have made.
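The anti-mirror idea described above (firing on the mirror of a position the drive has already planned) can be sketched as below. This is only a guess at the geometry: it assumes the mirror bot reflects our position through the centre of the battlefield, which the description does not state, and `MirrorAim` and its methods are hypothetical names.

```java
/** Hypothetical helper: aim at the point-reflection of our own planned position. */
final class MirrorAim {

    /** Reflects (x, y) through the centre of the battlefield (an assumed mirroring rule). */
    static double[] mirrorThroughCentre(double x, double y, double fieldWidth, double fieldHeight) {
        return new double[] { fieldWidth - x, fieldHeight - y };
    }

    /** Absolute bearing in the Robocode convention: 0 is up (north), increasing clockwise, in radians. */
    static double absoluteBearing(double fromX, double fromY, double toX, double toY) {
        return Math.atan2(toX - fromX, toY - fromY);
    }

    /**
     * Firing angle from the gun position to the mirror image of where the drive
     * plans to be at the time the bullet would arrive.
     */
    static double firingAngle(double gunX, double gunY, double plannedX, double plannedY,
                              double fieldWidth, double fieldHeight) {
        double[] target = mirrorThroughCentre(plannedX, plannedY, fieldWidth, fieldHeight);
        return absoluteBearing(gunX, gunY, target[0], target[1]);
    }
}
```

Picking which planned position to mirror (the one whose mirror image the bullet reaches at the right tick) is the part the gun would still have to solve; this sketch only covers the reflection and the bearing.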

Rumble Results

Fascinating results in the rumble. Despite winning fewer rounds than version 3.1, version 3.2 ranks about 30 places higher. Presumably by beating the simpler robots by quite a bit more than previously. Lots of variations in the PBI. I'll have to play around with some of the ones version 3.2 performed poorly against. I'll probably come up with a way to turn the flattening on and off automatically, which I think some of the other robots do. Only other thing I can think of to do for driving is to put some work into not running into the opponent robots, which is something I have ignored previously. AntiMirror components rocked.

Next version I will update the StatDrive. I know of a couple of ways it could be reasonably improved. And after that? Not sure...

My suggestion is that you need to up your score against Barracuda - there are still lots of points to be gained there. Until you are getting 99+% you are losing points due to not getting far enough away. If you watch your battles against Barracuda note every bullet hit and think about what your bot could have done differently to avoid it, be it reversing, being further away, not being against a wall, etc and then code something to get it to (not explicitly, but generally) avoid that situation next time. I'm not sure how your drive system works but in my bots I modify the desired angle towards the orbit centre proportional to the distance from the orbit centre, so as it gets farther away it moves towards the centre, and as it gets closer it moves away. Anyways, some food for thought. --Skilgannon 08:58, 8 June 2011 (UTC)
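The distancing rule described above, where the orbit angle leans toward the orbit centre when too far away and away from it when too close, might look something like this. It is a sketch under assumptions: `desiredDistance` and `maxAdjust` are hypothetical tuning parameters, and the linear scaling of the adjustment is just one way to make it "proportional to the distance".

```java
/** Hypothetical distancing helper for an orbiting (perpendicular) movement. */
final class OrbitDistancing {

    /**
     * Returns the heading to travel, in radians.
     *
     * @param bearingToCentre absolute bearing from the robot to the orbit centre
     * @param direction       +1 or -1, the current orbit direction
     * @param distance        current distance to the orbit centre
     * @param desiredDistance distance the robot would like to keep (assumed parameter)
     * @param maxAdjust       largest lean toward or away from the centre, in radians (assumed parameter)
     */
    static double orbitHeading(double bearingToCentre, int direction, double distance,
                               double desiredDistance, double maxAdjust) {
        // Positive error means we are too far away and should lean toward the centre.
        double error = (distance - desiredDistance) / desiredDistance;
        double adjust = Math.max(-maxAdjust, Math.min(maxAdjust, error * maxAdjust));
        // A pure orbit is perpendicular to the bearing; shrinking the angle leans inward.
        return bearingToCentre + direction * (Math.PI / 2 - adjust);
    }
}
```

At exactly the desired distance the result is a pure perpendicular orbit; the clamp keeps the robot from turning so far inward or outward that it stops orbiting altogether.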

- Were you looking at version 3.2 or 3.3? Version 3.2 gets about 93% against Barracuda. Yeah, it could be better. Version 3.3 in the rumble only got 80% against Barracuda. I can't explain that. I've run 3.3 myself against Barracuda many times, and it always gets around 93%, same as version 3.2. Ignoring that anomaly, where is that other 7%? It may be from collisions. Right now, XanderCat ignores collisions with the opponent, and can get stuck rammed against them. Also, it may be from getting too close when wall smoothing. I had mentioned the possibility of moving away from the wall in cases where danger each direction is similar in order to not get jammed against the wall as much, but I haven't implemented that idea yet. Skotty 14:21, 8 June 2011 (UTC)

- I'd really suggest hunting for bugs. Even 98% against HawkOnFire is low enough that I think notable surfing bugs are likely (and could be having a large hidden influence against other bots). It should be possible to get to 99.9% or so if the surfing algorithm is reasonably precise and the implementation bugs are worked out. --Rednaxela 14:48, 8 June 2011 (UTC)

- Version 3.4 moves up the ranks, but still gets 98% on HawkOnFire and 95% on Barracuda. I believe this is due to not having any dive protection when wall smoothing (I do employ distancing, if I understand correctly what this is -- attempting to back away some when in too close -- but this doesn't help if you are getting smoothed into a wall). I'm still working on a good solution for dive protection near walls. I tried a few things when developing version 3.4 but haven't come up with something that improves performance yet. Perhaps for the next version. -- Skotty 17:16, 9 June 2011 (UTC)

A quick note for you in relation to ucatcher. That bot uses bullet shielding. Basically, the better you shoot at it, the better chance it has to deflect your shot. Not too hard to detect when it happens either, just check the bulletHitBullet event and see if it happens a bunch. If so, don't aim for the center of the bot, but an edge of it instead. --Miked0801 23:31, 10 June 2011 (UTC)

Version 3.6

Interesting results in the Rumble. Compare this version to version 3.4, and check out the PBI graph. It seems I inadvertently tuned it against top bots, but somehow lost a bunch of points on the middle of the pack. With a little more analysis and testing, I think I can finally jump up in the ranks again. I suppose there could still be some hidden bugs I need to work out, too. The drives on 3.4 and 3.6 are the same but the gun on 3.6 is far more advanced. It should be notably higher in the ranks. Oh well. I guess I have to make version 3.7 before taking a break now. :-)

PBI

Okay, with version 3.8, I am frighteningly close to being able to tie or even beat some of the top and famous robots (note that I haven't been using flattening either), yet I am still way underperforming on the middle of the pack. Crazy! One problem being that apparently my small test bed of robots is not properly representative of the whole. I guess I need to go round up some problem bots for me and figure out what gives. Skotty 23:53, 12 June 2011 (UTC)

- One tip: when trying to improve against certain bots, make sure you run a lot of battles of your previous version against those bots, too. It takes hundreds of battles to get a real accurate result in any pairing, so the rumble result isn't really gospel. You can easily find yourself chasing phantoms (mistaking bad luck for underperforming). --Voidious 00:02, 13 June 2011 (UTC)

- A remark about version 4.0. Your wrapper against bullet shielders does have a very big offset. I use fixed 0.6 distance either way and my bullets are only occasionally intercepted. Your offset of 5 or 10 does influence your performance against the 'pack' too much. There are a lot of bots out there that give you a hard fight although ranked lower, as they do not squeeze every percent out of the minor bots. Compare your stats against a bot on a rank you wish to have, see your strengths and weaknesses compared to it and handle accordingly. --GrubbmGait 22:48, 15 June 2011 (UTC)

- The bullet shielding effect only engages if there are a set number of consecutive bullet-hit-bullets, so I think I'm okay on most of the pack. It would only happen by rare chance against a non-bullet-shielding bot (or so my theory goes). Skotty 23:43, 15 June 2011 (UTC)
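The detection scheme discussed above (count consecutive bullet-hit-bullet events, then aim off-centre) could be sketched like this. `ShieldDetector`, the threshold, and the offset are all hypothetical; in a real robot the three `on...` methods would be called from the corresponding Robocode event handlers (`onBulletHitBullet`, `onBulletHit`, `onBulletMissed`). The size of the offset is left as a parameter, since the thread disagrees on how large it should be.

```java
/** Hypothetical detector for bullet-shielding opponents. */
final class ShieldDetector {
    private final int threshold;
    private int consecutive;

    ShieldDetector(int threshold) {
        this.threshold = threshold;
    }

    /** One of our bullets collided with an enemy bullet. */
    void onBulletHitBullet() {
        consecutive++;
    }

    /** One of our bullets reached the opponent, so the streak is broken. */
    void onBulletHit() {
        consecutive = 0;
    }

    /** One of our bullets hit a wall, so the streak is broken. */
    void onBulletMissed() {
        consecutive = 0;
    }

    boolean shieldingSuspected() {
        return consecutive >= threshold;
    }

    /**
     * Angle (radians) to add to the firing angle so the bullet is aimed
     * offsetPixels to one side of the opponent's centre; zero when no shielding is suspected.
     */
    double aimOffset(double distance, double offsetPixels) {
        if (!shieldingSuspected()) {
            return 0.0;
        }
        return Math.atan2(offsetPixels, distance);
    }
}
```

Requiring a run of consecutive collisions is what keeps the offset from engaging against ordinary opponents, where a bullet-hit-bullet happens only by chance.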

From Robin

Holy Disco Batman, I'm stuck in the 70's!

HOT vs RamBots

He's no longer in the rumble, but there's a bot that might make you think twice about using HOT vs RamBots: MaxRisk. =) --Voidious 20:07, 21 June 2011 (UTC)

- Ramming messes with all my hit ratios, which was making the gun selection almost random against them, and occasionally XanderCat would miss a shot against a rammer following almost directly behind (probably due to a guess factor shot). This is why I added the extra decision to only use Head-On targeting against rammers (and note, the head-on targeting only takes effect when opponent is within very close range). I'll have to check out the bot you linked to see what it does. At very close range, I don't see how head-on could be a bad choice. At distance while they are closing, yes, head-on might not be best. But at distance, my regular gun selection is active. This makes me think, however, that I could be doing something better when the rammer is at distance, as my regular gun selection, as previously mentioned, is usually messed up by the wild hit ratios that occur when the opponent is constantly ramming.

- I haven't seen MaxRisk in battle for a while. But at the time he was released, Dookious was using HOT as part of his anti-ram mode and MaxRisk crushed him into little pieces. I think the issue is that MaxRisk uses prediction, so he's ramming the spot you're moving towards, not just heading straight at your current position. --Voidious 17:35, 22 June 2011 (UTC)

Rethink / XanderCat 4.8+

I lost some ranks when I refactored the guess factor and wave surfing code in version 4.7, and have yet to get them back. But I'm still convinced the refactor was a good thing.

I've ironed out all the major bugs, and if I watch XanderCat in some battles, I don't see it doing anything obviously wrong. This got me thinking about how I handle segmentation again. I think my philosophy on balancing segments for comparison was wrong in the drive, and am changing it in version 4.8. I also plan on excluding certain segment combinations that when I think about them, just don't make much sense (like using just opponent velocity). I think this should improve performance.

Beyond this, I'm not sure what I would do next to try to improve. I could run zillions of combinations of segments and parameters just to see what seems to work better against a large group of robots that I think is representative of the whole. Not sure I will go to that extent though. Skotty 01:09, 22 June 2011 (UTC)

I'd definitely say that you still have non-negligible bugs / issues with your surfing. Looking at Barracuda and HawkOnFire again, compared to DrussGT we have 95.82 vs 99.83 and 97.91 vs 99.91. In other words, both are hitting you ~20x as much, totally unrelated to how you log/interpret stats (because they're HOT). Not to be a downer - XanderCat is coming along great and you appear to have a really robust code base. Or if you're burning out on 1v1, how about Melee? It's a much different animal. =) --Voidious 01:28, 22 June 2011 (UTC)

- It appears as though I'm on the right track with version 4.8. Just for you Voidious (grin), in addition to other changes, I configured it to maximize scores against head-on targeters, which raised the Barracuda and HawkOnFire scores to 98.98 and 98.76 respectively (2 battles each so far). To get the rest of the way to DrussGT levels, I will need to tweak my dive protection a little more; it still causes XanderCat to stall near a wall long enough to be hit every once in a while. I may need to also tweak my "Ideal Position Drive" a bit more too, as it still drives too close to opponents occasionally when trying to reach an ideal position (the Ideal Position Drive runs at the start of each round before bullets start flying).

- Nice. =) For better or worse, the RoboRumble greatly rewards bots that can annihilate HOT and other simple targeters, so you might be surprised by how much of a ranking increase you can find by polishing that aspect of your surfing. It's not always the sexiest thing to work on, nor the most fun... But more importantly (to me), it's just a good way to verify that your surfing is working how it should. I can't find a good quote, but both Skilgannon and Axe have commented on the fact that if even a single HOT shot hits you, there's something wrong. --Voidious 17:51, 22 June 2011 (UTC)

- Very true. A wavesurfing bot should be able to dodge all *known* bullets perfectly, and HOT is only known bullets. Unless there is something funky like bullets fired from 20 pixels away, or a gun cooling time of 1 tick, all bullets *should* be avoidable.--Skilgannon 11:54, 23 June 2011 (UTC)

- Nice work, but just as a note, some might think me crazy, but I don't think *any* explicit dive protection is necessary for this sort of thing really. My surfing bots RougeDC and Midboss (same movement code), get 99.5% against HawkOnFire with no explicit dive protection whatsoever (and in certain past versions they did even better IIRC). The thing is, as I see it, dive protection is completely unnecessary if the surfing properly considers how movement changes botwidth. I much prefer it that way as it doesn't require tweaking/tuning to get right. Just my 2 cents on dive protection. --Rednaxela 20:50, 22 June 2011 (UTC)

- Well, I'd still call it "dive protection". =) But yes, I agree that multiplying danger by bot width (or dividing by distance, which I think is still what I do) is about the most elegant solution. And I doubt anyone's calling you crazy. Do any top bots since Phoenix use special cases? I guess I'm not sure about GresSuffurd or WaveSerpent. --Voidious 21:14, 22 June 2011 (UTC)

- Oops, I guess I'm out of touch. Diamond still has special cases, despite taking this approach - it scales the danger more than linearly beyond a certain threshold, as Dookious did. Maybe I'll test removing that, just for the sake of argument. =) I think it will lose points, though. Sure, for one bullet, the danger scales linearly with bot width. But that bot width affects future waves too. I suppose whether this is "explicit dive protection" would be up for debate. --Voidious 21:24, 22 June 2011 (UTC)

- Hmm...considering my own robot width when surfing...why didn't I think of that before? Guess what new feature will be in version 5.0? :-D Skotty 22:44, 22 June 2011 (UTC)

- Rather than multiplying danger by bot width, I prefer integrating over the affected bins, since many bins can be covered at close range... ;) --Rednaxela 22:49, 22 June 2011 (UTC)

- GresSuffurd has 2 lines of code handling both distancing and dive protection. This code hasn't been changed for years. The dive protection just handles the angle, not the danger. My latest effort was to use the summed danger of all covered bins instead of the danger of one bin to decide which direction to go(forward, stop, backward), but this approach let me fall out of the top-10 ;-) Sometimes a simple, proven, not optimal solution works better than a theoretical optimal solution. I do like the idea of letting danger instead of angle decide when to change direction though, and I will continue in this path with the next versions. Welcome to the dark caves of Robocode. --GrubbmGait 23:25, 22 June 2011 (UTC)

- If it's ever pitch black, watch out for GrubbmGait's pet Grue ;) --Rednaxela 00:21, 23 June 2011 (UTC)

- I think the reason these approximations often work better is that we're using a discrete system, and often the optimal assumes continuous. I think the other reason is that the optimal system often gets horrendously complex and bugs creep in, making the simple system actually more accurate. But these are just thoughts =) --Skilgannon 11:54, 23 June 2011 (UTC)

- Well, in this specific case, I would say the "optimal system" doesn't get more complex. I would argue integrating over botwidth is less complex, because:

- It also implicitly does the most important part of what many people use bin smoothing for

- There aren't really any parameters to need to tune

- To be clear, a very very very tiny amount of bin smoothing is still useful, to cause it to get as far as possible from danger, but the integrating over botwidth really does the important part of the smoothing. Actually, I suspect that if people get lower scores with integrating over bins, it's because it overlaps with their existing smoothing which has become far too strong.

- Basically, sometimes the "optimal system" may actually be less complex. It can reduce how many tunable parameters are needed, and also replace multiple system components necessary to fill a purpose. --Rednaxela 13:37, 23 June 2011 (UTC)

- I also think we tune around a lot of arbitrary stuff in our bots. I remember PEZ and I often lamented how something we'd set intuitively, and "couldn't possibly be optimally tuned!", resisted all attempts to tune it. I imagine that's sometimes the case when an existing simple/approximate approach performs better than the "new hotness totally scientifically accurate" approach. Dark caves indeed. =) --Voidious 14:48, 23 June 2011 (UTC)

- For the record, I don't use binsmoothing, as I don't see the purpose of it. If a safe spot is near danger or far away from danger does not matter, it is still a safe spot. --GrubbmGait 19:16, 23 June 2011 (UTC)

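The "integrate over the bins covered by the bot" idea from this thread can be sketched as follows. It is a rough illustration rather than anyone's actual implementation: `BotWidthDanger` is a hypothetical name, the 36-pixel robot is approximated as a circle of radius 18, and the maximum escape angle is passed in rather than derived.

```java
/** Hypothetical helper: danger summed over every bin the robot's width covers. */
final class BotWidthDanger {

    /** Approximate half-angle subtended by the robot, treating it as a circle of radius 18. */
    static double halfWidthAngle(double distance) {
        return Math.atan(18.0 / distance);
    }

    /** Maps a guess factor in [-1, 1] to the nearest bin index. */
    static int indexFor(int binCount, double gf) {
        double clamped = Math.max(-1.0, Math.min(1.0, gf));
        return (int) Math.round((clamped + 1.0) / 2.0 * (binCount - 1));
    }

    /**
     * Sums the danger of all bins overlapped by the robot when its centre sits at
     * guess factor gf, at the given distance from the wave origin.
     */
    static double integratedDanger(double[] bins, double gf, double distance, double maxEscapeAngle) {
        double halfGf = halfWidthAngle(distance) / maxEscapeAngle;
        int lo = indexFor(bins.length, gf - halfGf);
        int hi = indexFor(bins.length, gf + halfGf);
        double sum = 0.0;
        for (int i = lo; i <= hi; i++) {
            sum += bins[i];
        }
        return sum;
    }
}
```

Because more bins are covered at close range, the summed danger rises as the robot approaches the wave origin, which is how this approach can stand in for explicit dive protection without an extra tuning parameter.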

Case Analysis

Just out of curiosity, does anyone have any insight as to why deo.FlowerBot 1.0 drives so predictably against gh.GresSuffurd? I can't figure it out. FlowerBot just drives around in a big circle when fighting GresSuffurd, while seeming far less predictable against XanderCat 4.8. Maybe it's a distance thing? Looking a little closer, I see that a lot of top robots are only getting about 70% against FlowerBot, so perhaps it's just a lucky tuning on the GresSuffurd matchup (or unlucky, in the case of FlowerBot).

I'm hunting around to find cases where XanderCat performs poorly in cases where top robots perform very well. So far I haven't found a case I can learn anything from. I'll keep looking...

Flowerbot has a bug. This bot is derived from the (original) BasicGFSurfer, which had a flaw when bullets had a power of x.x5. It could not match the bullet to a wave due to a bug in the rounding, and therefore it did not 'count' the hit as a hit in its surfing. Just try it out and always fire 1.95 power bullets at it; you will obliterate it. There are still some more bots using this codebase, so this 'bug-exploiting' could gain some points for you. --GrubbmGait 19:25, 23 June 2011 (UTC)

- Yep. That's it. Skotty 19:35, 23 June 2011 (UTC)
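The exact form of the original rounding bug is not shown in this thread, so the following is only an illustration of how a rounding-based comparison can fail to match a hit to its wave, and how a tolerance-based comparison avoids it. `WaveMatcher` and both methods are hypothetical; the bullet speed formula `20 - 3 * power` is standard Robocode physics.

```java
/** Hypothetical illustration of matching a hit bullet to a recorded wave by bullet speed. */
final class WaveMatcher {

    /** Robocode bullet speed for a given fire power. */
    static double bulletSpeed(double power) {
        return 20.0 - 3.0 * power;
    }

    /**
     * Fragile: two nearly identical speeds can round to different values
     * when they straddle a rounding boundary such as 14.15.
     */
    static boolean matchesByRounding(double hitSpeed, double waveSpeed) {
        return Math.round(hitSpeed * 10) == Math.round(waveSpeed * 10);
    }

    /** More robust: accept any difference smaller than a small tolerance. */
    static boolean matchesByTolerance(double hitSpeed, double waveSpeed) {
        return Math.abs(hitSpeed - waveSpeed) < 0.001;
    }
}
```

A power of 1.95 gives a speed of about 14.15, which sits right on a one-decimal rounding boundary; that is the kind of value where a detected wave speed and the reported bullet speed could land on opposite sides.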

Which Waves to Surf

Anyone tried surfing all enemy waves at the same time? XanderCat currently surfs the next wave to hit, but I've been thinking about trying to surf all enemy waves simultaneously. Not sure if it would be worth trying or not.

Yep, many modern bots do. I surf two waves and weight the dangers accordingly. Doing more or all waves (would be just a variable change) would have almost no effect on behavior, because 3rd wave would be weighted so low, but cost a lot of CPU. --Voidious 19:45, 23 June 2011 (UTC)

In addition to what Voidious says, I'd like to note that going beyond two waves without eating boatloads of CPU could perhaps be done if one tries to be creative. One option is doing something like two and a half waves. What I mean by "half" is for the third wave, taking an approximate measure such as "If waves 1 and 2 are reacted to in this way, what is the lowest danger left for wave 3 that is approximately possibly reachable?". That approach leaves the branching factor of the surfing equal to 2-wave, but allows the 3rd wave to break ties in a meaningful way. Now... I haven't actually tried this, but just a thought about how to go beyond 2 without eating too much cpu. It might help in cases where the reaction to the first two waves would normally leave it particularly trapped... --Rednaxela 21:16, 23 June 2011 (UTC)

- I'm trying out surfing 2 waves at once for version 5.0, but I'm not sure how well it will work. I'm currently weighting the danger of the closer wave at 80%, and the 2nd wave (if there is one) at 20%. This is more a gut feeling for now. I may have to change it later. On a related note, version 5.0 pays more attention to robot width, such as determining when enemy bullet waves hit and when they are fully passed, but I was torn as to when to stop surfing the closest wave. Do I continue to surf it until it is fully passed, or do I stop surfing it right when it hits to try to get an earlier start on the next wave? For now, I'm doing the latter. Skotty 13:15, 24 June 2011 (UTC)

- About weighting between the waves, I believe one popular approach is weighting by (WaveDamage+EnergyGainOpponentWouldGet)/(distanceWaveHasLeftToTravel/WaveSpeed). This approach is nice because it gives a reasonable weighting of waves in "ChaseBullet" scenarios.

- As far as when to stop surfing a wave... what I personally do is surf the wave until it has fully passed, BUT I reduce the danger to 0 for the exact range of angles that would have already hit me (this is all using Precise Intersection to determine what range of angles would hit for each tick). This means that a wave that has almost completely passed me will still be getting surfed, but will only care about those few angles that could still possibly hit (meaning, often a very low weight). --Rednaxela 16:47, 24 June 2011 (UTC)

- Yeah, (damage / time to impact) is good, maybe squared. I can't remember if XanderCat is reconsidering things each tick (ie, True Surfing) or not, where that formula makes sense. While Rednaxela's setup is by far the sexiest, in a less rocket sciencey system (such as Komarious), I definitely favor surfing the next wave sooner - like once the bullet's effective position* has passed my center. (*Dark caves note: in Robocode physics, a bullet will advance and check for collisions before a bot moves. So for surfing, I add an extra bulletVelocity in cases like this.) --Voidious 17:53, 24 June 2011 (UTC)

- Originally, XanderCat was reconsidering things each tick, but I was running into what I referred to as "flip flopper" problems, where XanderCat would keep changing its mind, and it seemed to be hurting performance. So I switched it to only decide where to go when a new bullet wave enters or leaves the picture (plus it processes less that way). However, I could see reconsidering every tick as being superior with the kinks worked out, and the "go to" style surfing has problems with dive protection. I therefore just modified my drive again to make the frequency of surfing configurable -- a hybrid approach between "go to" surfing and true surfing -- where I can set the max time to elapse before a reconsideration is performed; if the waves in play haven't changed before the time limit elapses, a reconsideration is executed. This becomes true surfing when you drop the time limit to 1. Not sure what value I will use for 5.1+ yet. Skotty 19:59, 24 June 2011 (UTC)
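
For anyone reading along, the (damage + energy gain) / time-to-impact weighting mentioned in this thread can be sketched roughly as below. This is only an illustration, not code from any of the bots discussed: the class and method names are hypothetical, and clamping the time to impact to at least one tick is an added assumption. The damage, energy gain, and bullet speed formulas are the standard Robocode rules.

```java
/** Sketch: weight each wave's danger by (damage + enemy energy gain) / time to impact. */
final class WaveWeight {
    /** Robocode bullet damage for a given fire power. */
    static double bulletDamage(double power) {
        return 4 * power + Math.max(0, 2 * (power - 1));
    }

    /** Energy the firing bot regains when its bullet hits. */
    static double energyGain(double power) {
        return 3 * power;
    }

    /** Robocode bullet speed in pixels per tick. */
    static double bulletSpeed(double power) {
        return 20 - 3 * power;
    }

    /** Weight of one wave. The clamp to one tick is an assumption to avoid dividing by ~0. */
    static double weight(double power, double distanceLeft) {
        double ticksToImpact = Math.max(1.0, distanceLeft / bulletSpeed(power));
        return (bulletDamage(power) + energyGain(power)) / ticksToImpact;
    }

    /** Weighted average danger of one candidate position across all waves in flight. */
    static double combinedDanger(double[] dangers, double[] powers, double[] distancesLeft) {
        double total = 0;
        double weightSum = 0;
        for (int i = 0; i < dangers.length; i++) {
            double w = weight(powers[i], distancesLeft[i]);
            total += w * dangers[i];
            weightSum += w;
        }
        return weightSum == 0 ? 0 : total / weightSum;
    }
}
```

With a power 2.0 wave 140 pixels away and a power 1.0 wave 170 pixels away, both are 10 ticks out, so the weights come out as 1.6 and 0.7. Squaring the time to impact, as suggested above, would only change the divisor in `weight`.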

Rolling Average

I notice you say you are using a very high rolling average in both movement and gun. I have found in DrussGT that the gun should have a very high rolling average, but the movement a very low one to deal with bots that have adaptive targeting. By low I mean less than 5 on very coarsely segmented buffers, and less than 1 on finely segmented buffers. But I suggest you experiment with your own data and figure out what works best for you =) --Skilgannon 10:35, 27 June 2011 (UTC)

#1 Against PolishedRuby

I just checked for fun, and found that XanderCat currently holds the #1 score against PolishedRuby! Only 2 battles in the rumble, and only best by a slim margin, so XanderCat could slip down. But for the moment, I would like to claim my virtual gold medal against mirror bots. :-D —Preceding unsigned comment added by Skotty (talk • contribs)

Well, seems you certainly have a good anti-mirror system in place. I've never gotten around to building one of those... --Rednaxela 06:18, 1 July 2011 (UTC)

End of the Road?

Well...I'm now at a loss as to how to further improve. I have a couple of ideas for gaining a few points here and there, but nothing that would boost me by much. I've played around with a few changes against a small test bed of robots that top bots consistently score about 15 to 20 percent higher than me against, but so far I haven't found a way to improve my score any against them. Ho hum...yeah...no clue. Maybe some more ideas will come to me, but for now, looks like #49 in the rumble is the end of the road for my rank climbing days. Not without picking apart open source top bots, anyways. -- Skotty 02:58, 1 July 2011 (UTC)

- In case anyone cares to comment, I collected a list of problem robots that other top robots consistently score significantly higher than XanderCat on. Maybe there is a connection between some? Hmm...maybe I should look into "flattening" again. Right now I do not use flattening. That could be... if I can just figure out under what circumstances to use it...

- rz.Apollon

- nat.BlackHole

- ary.Help

- Bemo.Sweet30

- kawigi.mini.Coriantumr

- ary.mini.Nimi

- tad.Dalek98

- apv.TheBrainPi

- pez.gloom.GloomyDark

- cx.BlestPain

- tide.pear.Pear

- voidious.mini.Komarious

Hmm... well most of those you list are what I'd consider mid-tier adaptive bots. I would take that to imply that your greatest weakness may be against other adaptive bots. My instinct would be that your gun and/or movement adapts much too slowly against other adaptive bots. Flattening may be useful, but fast-adapting is far far more important. RougeDC and Midboss rank fairly high yet do not use any flattening. I never found it worth the bother.

I think this really doesn't have to be the end of the road. I'd highly suggest running your bot's movement/gun against MC2K7 and TC2K7 (Use the fast-learning 35 round variants for both). That should give you an idea of where your bot stands in both movement and gun, against both adaptive and non adaptive opponents. That should show you where to focus I think, and also allow you to track your own improvements with greater precision than the rumble. --Rednaxela 05:55, 1 July 2011 (UTC)

I'm guessing that you need to lower the rolling average on your surfing. Really low, like to around 1. That will speed up your movement adapting to what these bots learn against you. Beyond that, it is all about bughunting, feature adding and tweaking. --Skilgannon 11:37, 1 July 2011 (UTC)

Even without flattener, without rolling average on the movement, without an anti-surfer gun and without bin-smoothing(??), it is possible to reach #10. Choose the attributes of your gun wisely (read: tuned to the opponents movement) and use attributes in your movement that are not common in the 'standard' guns. Be careful with special cases except when proven that they do not interfere with 'normal' behaviour. If a bot gets 90% where you only get 65%, watch that bot's behaviour in situations where you would be hit. Be aware that 'perfect behaviour' could lead to predictable behaviour. Good luck with your quest for top-25. --GrubbmGait 14:31, 1 July 2011 (UTC)

I agree this doesn't have to be the end of the road. But a break might do you some good. =) Burning yourself out on tweaks is not always the best way to find your next big rating jump. You've done a lot in a short time here!

I don't think you need to peek at any other bots to get to the top. Phoenix and Shadow are proof of that. My own feeling is that in any sufficiently advanced field, you need to understand what everyone's already done before you can really innovate. Calculus didn't spring up out of a void. Dookious climbed to #1 mostly by emulating / perfecting the ideas that were already out there, but then I kept going and Dookious/Phoenix took a huge lead over the rest of the field with some innovations along the way. Skilgannon eventually came along with DrussGT, caught Dookious, and kept on crushing it and took another huge lead - it's only recently that WaveSerpent and Diamond closed that gap a bit. Sure, we both consumed ideas from the community and studied others' code (at least I did), but I still feel we innovated pretty well on top of that. It's hard to say you're copying everyone else when you're way out in front of the pack. =)

Of course there's also value in thinking for yourself. I've been less inclined to study others' code as time goes on. Everyone feels differently about this stuff, so I won't tell you how to go about it - definitely just do what you like! Just felt like sharing my thoughts. And remember there are other territories - MiniBots, Twin Duel, Melee, saying screw it and just becoming a gun nut... Or we could throw around a new rule set again - I would try and participate. Good luck. =) --Voidious 16:00, 1 July 2011 (UTC)

- Just taking a guess, I would say my drive needs more adjustment than my guns. I say that based on having a somewhat low survival score for XanderCat's rank in the rumble. I started toying around with low rolling averages on the drive as Skilgannon suggested, but I think I need to make a few modifications before it will work right (for example, right now I use what I call a "base load" on all my factor arrays, that essentially just puts the equivalent of a bullet hit at the head-on factor. It is meant primarily for when just getting started, but it has excessive impact when using a really small rolling average. I probably need to change how that works). I'm also thinking of trying out the movement and targeting challenges. Should I add XanderCat to those tables if I do? Also, did you all just manually calculate the percentages based on the scores, running manually or by custom scripts? Or is there some already set up script for running the challenge battles? Might also try out the WaveSim thing. -- Skotty 17:22, 1 July 2011 (UTC)

- RoboResearch is fantastic for running the challenges and it outputs results in the wiki syntax. Pretty sure all recent challenges come with a challenge (.rrc) file for RoboResearch. It's also a great tool for general testing. Could be easier to download/install, though, so shout if you have problems. --Voidious 17:50, 1 July 2011 (UTC)

- I tried setting up RoboResearch, but the instructions are outdated, and I haven't spent the time to try to figure out how to fix it yet. The instructions call for running the main class TUI which no longer exists. I tried running the GUI class instead, but I don't even know if that is supposed to be functional yet. It couldn't find a settings file, nor could it load the HSQLDB JDBC driver. The instructions don't say anything about those. Error output:

java.io.FileNotFoundException: settings\properties (The system cannot find the file specified)
    at java.io.FileInputStream.open(Native Method)
    at java.io.FileInputStream.<init>(Unknown Source)
    at java.io.FileReader.<init>(Unknown Source)
    at simonton.collections.PersistentProperties.load(PersistentProperties.java:34)
    at simonton.collections.PersistentProperties.load(PersistentProperties.java:138)
    at simonton.collections.PersistentProperties.<init>(PersistentProperties.java:83)
    at roboResearch.Constants.<clinit>(Constants.java:24)
    at roboResearch.GUI.<init>(GUI.java:42)
    at roboResearch.GUI.main(GUI.java:24)
Exception in thread "main" java.sql.SQLException: ERROR: failed to load HSQLDB JDBC driver.
    at roboResearch.engine.Database.<init>(Database.java:63)
    at roboResearch.GUI.<init>(GUI.java:44)
    at roboResearch.GUI.main(GUI.java:24)

- I just noticed part of the problem is how I checked out from SVN. When I did a checkout from trunk, I ended up with a trunk folder, which looking at the run instructions, is not the right way to get it checked out into a project. I'll have to monkey around with the checkout procedure a bit more to figure it out. It would be nice if there was a brief set of instructions on how to properly check out the project. -- Skotty 02:30, 5 July 2011 (UTC)

- Another update -- I figured out that all I had to do was change the checkout URL, basically adding "/trunk" to it. So the URL is: "roboresearch.svn.sourceforge.net/svnroot/roboresearch/trunk" (less the https so wiki doesn't think it's a link). But I still need more detail on how to run it. TUI doesn't exist, and the only info I found on the GUI version is that it is a work in progress. Do I run GUI? Is it finished? Do I have to run something else? Do I really want the trunk version (is it currently fully functional)? -- Skotty 02:37, 5 July 2011 (UTC)

- I think TUI was just renamed CLI, that's what I use. I'm pretty sure the GUI works fine, though, as I know several other people use / prefer it. With the CLI, I run a database instance separately from database/db.sh. I'm not sure if I created this or not, but it contains:

java -Xmx1024M -cp ../hsqldb.jar org.hsqldb.Server -database.0 file:roboresearch -dbname.0 roboresearch

Not sure if you need to do this with the GUI. And yeah, we really need to package up RoboResearch and update the docs. It's an essential tool and installing it doesn't have to be a pain. --Voidious 02:42, 5 July 2011 (UTC)

- I tried running the GUI. Seemed to be working at first, but then crashed with a NPE. Not sure what the problem is yet. Error description doesn't provide enough information for a quick fix. -- Skotty 02:53, 5 July 2011 (UTC)

Thread 1: Unrecognized output from robocode, "xandercat.XanderCatMC2K7 5.1.mc2k7: Exception: java.lang.NullPointerException". Killing battle.

- I think that's saying that your bot hit an NPE. It kills the thread/ignores the battle if either bot hits an exception, but goes on its merry way running the rest of the battles, at least in the CLI. (Not everyone agrees this is the "right" behavior...) If you run that pairing in Robocode do you ever hit an NPE? --Voidious 02:58, 5 July 2011 (UTC)

- I've tried to track down the problem, but have so far been unsuccessful. I have run many thousands of battles manually, and not one exception. But the same robot in RoboResearch keeps throwing NPEs. And since I only get the one-liner explanation in RoboResearch...well, I'm not going to be able to track down an NPE in a MegaBot when I have to examine every single line of code to find it. -- Skotty 05:24, 5 July 2011 (UTC)

- RoboResearch runs Robocode in a separate process, to avoid memory issues and allow easier scaling. What I suggest you do is place a try/catch around all of your code and write any errors that happen to a file. One other thing that this might be is that you got the name slightly wrong. Make sure you copied your .jar into the robots directory and double check all the capitals etc in the name.--Skilgannon 07:48, 5 July 2011 (UTC)
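
The try/catch-and-write-to-file suggestion above might look something like the sketch below. It is a plain-Java illustration with hypothetical names (`CrashLog`, `runGuarded`), not XanderCat's actual code. Inside a real robot, file access is restricted by Robocode, so you would normally open the stream with `new RobocodeFileOutputStream(getDataFile("..."))` rather than a plain `FileOutputStream`; the plain stream is used here only so the example runs outside Robocode. Only `RuntimeException` is caught, on the assumption that Robocode's own control-flow errors should be left alone.

```java
import java.io.File;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.io.PrintStream;

/** Sketch: save an unexpected exception to a file so it can be read after the battle. */
final class CrashLog {
    /** Writes the stack trace of t to out; returns true if the write succeeded. */
    static boolean write(Throwable t, OutputStream out) {
        PrintStream ps = new PrintStream(out);
        t.printStackTrace(ps);
        ps.flush();
        return !ps.checkError();
    }

    /** Runs body; if it throws, prints the exception and saves it to logFile. */
    static void runGuarded(Runnable body, File logFile) {
        try {
            body.run();
        } catch (RuntimeException e) {
            e.printStackTrace(System.out);
            // Assumption: in a robot, replace FileOutputStream with RobocodeFileOutputStream.
            try (OutputStream out = new FileOutputStream(logFile)) {
                write(e, out);
            } catch (IOException ignored) {
                // Could not write the log; nothing more to do here.
            }
        }
    }
}
```

In a robot, the body passed to `runGuarded` would be the main loop of `run()`, so any exception ends the loop but leaves a full stack trace behind.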

If you have real problems you can test against my mid-range Seraphim, pretty much an anti-adaptive robot (better against surfers than the other kinds). I also noticed that small bug fixes can cause considerable point gain, along with hammering out corner cases. For example, removing my victory dance increased Seraphim's score, as it was interfering with end-of-round bullet dodging: it started dancing and got hit by a last bullet or two the enemy fired before it died. That gained it a cool 20 points at the time. -- Chase-san 09:31, 5 July 2011 (UTC)

Fixing My Wave Surfing Rolling Depth

I think it is time for me to go back and really think about how I am processing segments to get a low rolling depth working properly on XanderCat. Let me start by giving a quick explanation of how I store my data, as it may be a bit different than what most robots do.

First off, I currently record two types of information. Hits and visits. Hits are recorded in a factor array using bin smoothing similar to what BasicGFSurfer does, and visits is just an incremented integer that says I was at a particular segment combination for a bullet wave, which I do for all bullet waves.

I store all of my wave surfing hit data in a 2-dimensional array. The first dimension is the segment, the second dimension is the factors/bins. I can use any number of different segmenters. How this works is that I index all segmenters into the single segment array. So let's say I have segmenter A with 4 segments, and segmenter B with 3 segments, and 87 bins. My hit data array would then be a double[12][87] (3*4=12 segment combinations, 87 bins).

I store all of my wave surfing visit data in a 1-dimensional array of int. Following the previous example, it would be an int[12] array. At present, this is used to balance the arrays when picking the best one (by dividing by total visits) and to decide whether or not I will consider using a particular segment combination (e.g., I can say not to use a particular segment combo until it has seen at least X number of visits).

When I want to consider a particular segment combination to use for surfing any particular wave, I pull back all the indexes that match that segment combination and add the bin arrays for those indexes into a single combined array.
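
To make the storage layout above concrete, here is a minimal sketch of flattening several segmenters into one index and summing the bin arrays of every matching row. The class name, the row-major ordering, and the use of -1 as an "any segment" wildcard are assumptions made for illustration; the post does not say how XanderCat orders or selects its indexes.

```java
/** Sketch: several segmenters flattened into one index over a double[combos][bins] array. */
final class SegmentIndex {
    private final int[] sizes; // number of segments per segmenter, e.g. {4, 3}

    SegmentIndex(int... sizes) {
        this.sizes = sizes.clone();
    }

    /** Total number of segment combinations (4 * 3 = 12 in the example above). */
    int combinations() {
        int n = 1;
        for (int s : sizes) {
            n *= s;
        }
        return n;
    }

    /** Flattens one segment per segmenter into a single row index (row-major order). */
    int flatten(int... segments) {
        int index = 0;
        for (int i = 0; i < sizes.length; i++) {
            index = index * sizes[i] + segments[i];
        }
        return index;
    }

    /** Sums the bins of every row matching pattern; -1 matches any segment (an assumption). */
    double[] combine(double[][] hits, int... pattern) {
        double[] combined = new double[hits[0].length];
        for (int row = 0; row < hits.length; row++) {
            if (!matches(row, pattern)) {
                continue;
            }
            for (int bin = 0; bin < combined.length; bin++) {
                combined[bin] += hits[row][bin];
            }
        }
        return combined;
    }

    /** Decodes row back into per-segmenter segments and compares against pattern. */
    private boolean matches(int row, int[] pattern) {
        for (int i = sizes.length - 1; i >= 0; i--) {
            int segment = row % sizes[i];
            row /= sizes[i];
            if (pattern[i] >= 0 && pattern[i] != segment) {
                return false;
            }
        }
        return true;
    }
}
```

For example, with `new SegmentIndex(4, 3)`, `combine(hits, 2, -1)` adds the three rows where segmenter A is in segment 2, regardless of segmenter B.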

When I added rolling depth support, I was only thinking of rolling off hits. I created a List<List<Parms>> for this, where Parms was just a little class that held the necessary information to roll back a previously added hit. The outer list index matched the segment index, while the inner list stored data for X number of hits. Once the list reached the preset rolling depth value, for every new hit it would remove the oldest hit from the list and roll it back. So, for example, let's say I get hit, and the combined segment index is 5, factor 23. This would get added to the hit data array (let's call this hitArray) centered at hitArray[5][23]. The hit would also get recorded in the rolling depth list (let's call this rollData) at rollData.get(5).add(new HitParms(...)). If the roll depth had been exceeded, it will then remove the oldest hit data in the list (rollData.get(5).remove(0)) and roll the old hit off the hitArray (same as adding a new hit, only it uses the saved data and applies a negative hit weight to remove the old hit).
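
A rough sketch of the roll-back scheme described above, for readers who want to see it in code. The `Hit` class is a hypothetical stand-in for Parms, and the bin smoothing uses the 1 / (distance² + 1) shape from BasicGFSurfer as an assumption about what "similar to BasicGFSurfer" means here.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

/** Sketch: keep the last `depth` hits per segment and subtract the oldest when exceeded. */
final class RollingHits {
    /** Hypothetical stand-in for "Parms": what is needed to undo a hit later. */
    private static final class Hit {
        final int bin;
        final double weight;

        Hit(int bin, double weight) {
            this.bin = bin;
            this.weight = weight;
        }
    }

    final double[][] hitArray;
    private final List<Deque<Hit>> rollData = new ArrayList<>();
    private final int depth;

    RollingHits(int segments, int bins, int depth) {
        this.hitArray = new double[segments][bins];
        this.depth = depth;
        for (int i = 0; i < segments; i++) {
            rollData.add(new ArrayDeque<>());
        }
    }

    /** Adds a smoothed hit and rolls the oldest one off once the depth is exceeded. */
    void addHit(int segment, int bin, double weight) {
        apply(segment, bin, weight);
        Deque<Hit> queue = rollData.get(segment);
        queue.addLast(new Hit(bin, weight));
        if (queue.size() > depth) {
            Hit oldest = queue.removeFirst();
            apply(segment, oldest.bin, -oldest.weight); // negative weight undoes the old hit
        }
    }

    /** Bin smoothing centered on centerBin (assumed BasicGFSurfer-style falloff). */
    private void apply(int segment, int centerBin, double weight) {
        double[] bins = hitArray[segment];
        for (int i = 0; i < bins.length; i++) {
            bins[i] += weight / (Math.pow(i - centerBin, 2) + 1);
        }
    }
}
```

Because the same smoothing is applied with a negative weight, the rolled-off hit cancels out up to floating point rounding.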

To complicate things a tad further, I also add what I call a base load to whatever array is to be used for the current surf wave (this base load doesn't actually get added to the hitArray, it is added to a temporary array used for surfing the current wave). This base load is just the equivalent of a single head-on hit. It gets lost in the background when there is a lot of hit data, but is crucial in the beginning to avoid getting hit by head-on targeting.

And finally, I also store a combined no-segment array separately, which I rely on early in the match when the segment combinations do not have a visit count over a certain threshold. I could obtain this by adding all segment arrays, but this seemed excessive, so I just store it in a separate array.

Given all this, I'm left wondering a few things. One, what do the rest of you really mean when you talk about having a rolling depth of 1 or 2? Are we talking rolling for every visit, every hit, or something else? Two, how should I handle avoiding head-on early in the battle when there is no data to rely upon without it messing up trying to use a low rolling depth (and will my current base load approach suffice for this)? Three, as I currently have it implemented, I can only roll hit data for all segments combined. I might be able to manually roll on the fly in the temporary array used for surfing, but I need to figure out what the rest of you are really talking about when you refer to rolling depth before I try such a thing.

—Preceding unsigned comment added by Skotty (talk • contribs)

Well, the usual "rolling average" method used in most targeting is far far simpler than what you describe. Usually exponential moving average is used. Instead of decrementing old hits, you just decay the weighting of old data. In a system like you describe with "hits" and "visits" kept separate, the exponential rolling average strategy would be, when you get a hit in a segment:

- First, multiply all values in "hits" and "visits" by a constant between 0.0 and 1.0.

- Then add your new hit and new visit, but multiply each by 1 minus the constant.

For an example, if you choose a constant of 0.5, then it means that when there is a hit in the segment, the old data will be worth half as much as before. Also, some bots do it slightly differently so the decay is constant, rather than only occurring when a segment is hit, though that takes a little more work to do efficiently.

The method you describe should work too, if you decrement both visits and hits when decrementing old hits I believe. I'm pretty sure decrementing both would be necessary to keep the values sane. Personally, I don't consider it worth the complexity, but diverse techniques are always interesting. :)

About the "base load", I'm pretty sure most bots do either of the following two things:

- Initialize the data to contain the "base load" (which means that in an exponential moving average system, it'll decay away to near-nothing pretty fast)

- or, make the "base load" a special case that only applies when there are no hits in the segment.

Hope that helps. --Rednaxela 23:56, 6 July 2011 (UTC)
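
The two-step exponential decay described in this reply can be sketched as below. The class and field names are hypothetical, the visit count is kept as a double so it can decay, and the bin smoothing shape is an assumption carried over from BasicGFSurfer.

```java
/** Sketch: exponential moving average over one segment's hits and visits. */
final class DecayingSegment {
    final double[] hits;
    double visits;
    private final double keep; // constant between 0.0 and 1.0; old data is multiplied by this

    DecayingSegment(int bins, double keep) {
        this.hits = new double[bins];
        this.keep = keep;
    }

    /** Decays all old data, then adds the new hit and visit scaled by (1 - keep). */
    void logHit(int bin) {
        for (int i = 0; i < hits.length; i++) {
            hits[i] *= keep;
        }
        visits *= keep;
        for (int i = 0; i < hits.length; i++) {
            // Assumed smoothing: full weight at the hit bin, falling off with distance squared.
            hits[i] += (1 - keep) / (Math.pow(i - bin, 2) + 1);
        }
        visits += 1 - keep;
    }
}
```

With `keep = 0.5`, two hits on the same bin leave that bin at 0.75: the first hit contributes 0.25 after decay and the second contributes 0.5.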

Ok, a few things to tackle here. =)

- In most VCS / GF bots, the danger value for each bin is a number between 0 and 1. When data is logged for a segment, the value for each bin in that segment becomes ((rolling depth * old value) + new value) / (rolling depth + 1). The "new value" would be 1 for the hitting bin, some bin smoothed value < 1 for the rest. You might use min(rolling depth, times this segment has been used) instead of rolling depth, a trick I learned from PEZ - ie, use the straight average if you don't have rolling depth's worth of data. A rolling average of 1 means all previous data is weighted exactly equal to the new data. There's no magical reason you need to use this style of rolling average, but it's pretty simple and elegant. Bots that don't use segments have to come up with different styles of data decay.

- What you're referring to as a "visit" isn't what most of us are referring to. Generally, a visit is to a bin, not (just) a segment. A visit means "I was at a GuessFactor when the wave crossed me", as opposed to "I was at a GuessFactor when a bullet hit me". A visit is what a gun or a flattener uses to learn. What you're referring to as a "visit" is what you'd use in the min(rolling depth, x) example above, I think.

- About hard coding some HOT avoidance... Many bots use multiple buffers at once and sum the dangers from all buffers. In that case, you can just have one unsegmented array and load it with one shot at GF=0 instead of looping through every segment of all buffers. There are other benefits to summing multiple buffers of varying complexities, like having a balance between fast and deep learning (without having to figure out when to switch). In Komarious, I just add a tiny amount of danger smoothed from GF=0 after I poll my stats - mainly a Code Size-inspired trick, but not a bad approach. Diamond just uses a smoothed GF=0 danger when he has no data - this is only until the first time he's hit since he uses Dynamic Clustering.

Hope that helps! --Voidious 01:41, 7 July 2011 (UTC)

- And just a quick note to be clear, the "((rolling depth * old value) + new value) / (rolling depth + 1)" formula that Voidious cites is exactly mathematically equivalent to the method I was going on about above. It's just that the constant I used in my explanation is equal to "(rolling depth / (rolling depth + 1))". Two different ways of describing the exact same thing. Personally, I find what people sometimes term the "rolling depth" number less intuitive than the "what to multiply the old data by" constant, but it's really a matter of personal taste. I just thought I'd point the equivalence out. :P --Rednaxela 02:07, 7 July 2011 (UTC)
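
The equivalence noted here is easy to check in a few lines. The sketch below (hypothetical names throughout) implements the rolling depth formula, the "multiply old data by a constant" form, and the min(rolling depth, times used) variant.

```java
/** Sketch: the rolling-depth formula and the decay-constant form give the same result. */
final class RollingAverage {
    /** ((rolling depth * old value) + new value) / (rolling depth + 1). */
    static double roll(double oldValue, double newValue, double rollingDepth) {
        return (rollingDepth * oldValue + newValue) / (rollingDepth + 1);
    }

    /** Decay form: old data multiplied by keep, new data by (1 - keep). */
    static double decay(double oldValue, double newValue, double keep) {
        return keep * oldValue + (1 - keep) * newValue;
    }

    /** The constant that makes decay() match roll() for a given rolling depth. */
    static double keepFor(double rollingDepth) {
        return rollingDepth / (rollingDepth + 1);
    }

    /** Uses the straight average until the segment has rolling depth's worth of data. */
    static double rollCapped(double oldValue, double newValue, double rollingDepth, int timesUsed) {
        return roll(oldValue, newValue, Math.min(rollingDepth, timesUsed));
    }
}
```

For instance, with an old value of 0.2, a new value of 1.0 and a rolling depth of 3, both `roll` and `decay` with `keepFor(3) = 0.75` give 0.4. With `timesUsed = 0`, `rollCapped` simply returns the new value.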

What Voidious describes is exactly what I used to do - keep a whole bunch of buffers, where the bins represent the hit probability at that guessfactor, and when logging a new hit use a 1/((i-index)*(i-index) + 1) binsmoothing technique coupled with that rolling average formula he gave. There were 2 main problems: execution time (slow), and memory usage (high). I did what I could to get around this by hoisting the inverse of all the divisions outside of the loops and switching to floats, but that only helped so much. So, a while ago I changed my data-logging in DrussGT: instead of a whole bunch of arrays of smoothed hits - with around 100 bins - I instead now keep the guess factor of the last 2*rollingDepth + 1 hits. Each hit I weight less and less exponentially, by logging each hit into the bin it corresponds to (this is at wavesurfing time), and incrementing that bin with a value that gets progressively smaller. The factor I use for making the increment get smaller is roll = 1 - 1 / (sb.rollingDepth + 1), and each time I go through the loop I make the increment smaller by doing increment *= roll. By carefully choosing a starting value for increment I was able to make this system perform identically to the one that used the rolling average formula above while using a fraction of the CPU to log hits and a fraction of the memory to store them. Once all the hits are logged into their bin, I take this array of unsmoothed hits and smooth them. This has the huge advantage of taking any duplication of hits and essentially merging them again, speeding up the process further. Bins that don't have hits in them don't need to be smoothed, and in practice there are quite a lot of bins that are empty. Data logging has been sped up because instead of smoothing data into hundreds of buffers, each with a hundred bins, instead I just shift the hits over by one in these hundreds of buffers and add the new hit to the beginning. 
There are a few other tricks that I used to speed up the whole process, like only allocating the array for the hits once that segment has been hit and pre-calculating the indexes for all the buffers I need to access. But choosing this system has essentially eliminated all the skipped turns DrussGT used to experience, while still keeping all of my hundreds of buffers and all of my original tuning intact. --Skilgannon 09:23, 7 July 2011 (UTC)
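
A very rough sketch of the queue-of-hits idea described above, for a single buffer. This is not DrussGT's code: the names are hypothetical, the starting increment of 1 / (rollingDepth + 1) is a guess (the post only says it was chosen carefully), and rounding the capacity up for fractional depths is also an assumption.

```java
import java.util.ArrayDeque;
import java.util.Deque;

/** Sketch: store only recent hit bins, then build and smooth the danger array at surf time. */
final class HitQueue {
    private final Deque<Integer> recentBins = new ArrayDeque<>(); // newest first
    private final int capacity;
    private final double roll;
    private final double firstIncrement;

    HitQueue(double rollingDepth) {
        this.capacity = (int) Math.ceil(2 * rollingDepth) + 1; // 2*rollingDepth + 1 hits
        this.roll = 1 - 1 / (rollingDepth + 1);
        this.firstIncrement = 1 / (rollingDepth + 1); // assumed starting value
    }

    /** Logging is cheap: push the new hit and drop the oldest if the queue is full. */
    void logHit(int bin) {
        recentBins.addFirst(bin);
        if (recentBins.size() > capacity) {
            recentBins.removeLast();
        }
    }

    /** Builds unsmoothed bins with exponentially shrinking increments, then smooths them. */
    double[] dangers(int binCount) {
        double[] raw = new double[binCount];
        double increment = firstIncrement;
        for (int bin : recentBins) {
            raw[bin] += increment;
            increment *= roll;
        }
        double[] smoothed = new double[binCount];
        for (int j = 0; j < binCount; j++) {
            if (raw[j] == 0) {
                continue; // empty bins contribute nothing, so skip them
            }
            for (int i = 0; i < binCount; i++) {
                smoothed[i] += raw[j] / ((i - j) * (i - j) + 1.0);
            }
        }
        return smoothed;
    }
}
```

Duplicate hits on the same bin are merged in `raw` before smoothing, which is where the saving described above comes from.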

- That's an interesting approach. It seems to me though, that once you're storing a list of hits, why not forgo bins entirely? It seems like it would be simpler and have essentially the same result. Maybe I'm wrong, but I'd think performance could also be improved further, with a method that instead of adding many sets of bins, concatenates a list of the 2*rollingDepth + 1 hits from each segment, along with the weighting for each list entry. Then instead of calculating the value for a bunch of guessfactor bins, take advantage of knowing the integral of the smoothing function to do a fast and precise calculation of where the peak would be. Just a little thought. --Rednaxela 12:37, 7 July 2011 (UTC)

- I've toyed with the idea of using some sort of DC system as a replacement, but the lack of rolling data makes me very hesitant. Also, it's not enough just having one peak (maybe you were thinking of targeting?): I need the danger at every point on the wave. I could use the raw data at each point I need to check, which would be slow, or I can take a whole bunch of evenly spaced samples, which is basically bins, which is what I am doing. My explanation was a bit complex I think. Perhaps a simpler explanation would be: when logging a hit, instead of smoothing the hit into a buffer, put it into a queue (of which many exist, at their own 'location' just like a segmented VCS system) and delete the oldest entry in the queue. When the time comes to stick the hits into a wave, go through the queue and increment the bin in your 'wavebuffer' that corresponds with the GF of each hit in each queue. Make sure that the older items in the queues are weighted exponentially lower. When you've put all the hits into that buffer, use a smoothing algorithm to, essentially, 'fill in the blank areas'. That's basically it, the rest is just implementation details. --Skilgannon 13:42, 7 July 2011 (UTC)

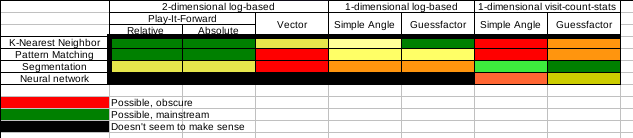

- By forgoing bins I never meant using DC. I mean segmented queues of hits like you have, but use non-bin methods to sum the data and find the peak. When not using bins for storage, I kind of feel it's a silly/wasteful to use bins for analysis of the stored data. As a note, I think DrussGT's movement may be the first actual implementation that fits the segmented log-based guessfactor category. See this chart I made a while back:

- --Rednaxela 14:01, 7 July 2011 (UTC)

- I think WaveSerpent might fit that too. (Maybe just WaveSerpent 1.x.) And, even further off-topic, I think ScruchiPu and/or TheBrainPi might belong in one of those black NN slots - for some reason I thought I recalled them being fed the tick by tick movements, not the firing angle / GF. --Voidious 15:59, 7 July 2011 (UTC)

- I think ScruchiPu and/or TheBrainPi are off this chart entirely. IIRC they are fed by tick by tick movements yeah, but that's neither log-based nor visit-count-stat based, so it wouldn't fit in the black slots. It would go in its own column, as a subtype of "play-it-forward" but not a subtype of "2-dimensional log-based". --Rednaxela 16:32, 7 July 2011 (UTC)

- Off-topic, but... Decaying surf data in a DC system is kinda interesting. Designing a system for it in Diamond really made me appreciate VCS / rolling average. =) Instead of weighting things by age, I sort my "cluster" inverse-chronologically and weight each hit according to its sort position. I actually tried hard to figure out how to emulate a rolling average of 0.7 - the most recent data is weighted about 60/40 to the rest of the data, 2nd most recent is 60/40 to the rest of the rest, etc. That got me thinking about the golden mean, like in this image. I weight the most recent scan 1, and the rest by (1 / (base ^ sort position)), with a base of golden mean = ~1.618. So it's 1, .38, .24, .15. I figured the golden mean was cool and magical and this modeled rolling average = 0.7 pretty well, so I stuck with it. =P The first one basically gets a sort position of 0 instead of 1.

- Come to think of it, I really could model it to just weight it exactly how a rolling average 0.7 would in a segment. Maybe I'll try that. --Voidious 14:34, 7 July 2011 (UTC)

- /me waits for Rednaxela to come up with the real formula he should use to model the weights like the relative areas of the golden mean rectangles. =) --Voidious 14:43, 7 July 2011 (UTC)

- Yes, duh, I should square the golden mean since it's the ratio of the length of the sides, while the area is that length squared. And not special case sort position 1. I'm kind of excited to have something stupid like this to tinker with... =) --Voidious 14:49, 7 July 2011 (UTC)
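
For comparison, here is a small sketch of the sort-position weights discussed in this sub-thread. It is one reading of the comments, not Diamond's code: `original` follows the "1, .38, .24, .15" scheme with the newest hit special-cased, `squared` is a guess at the revised version (golden mean squared, no special case), and `rollingRatio` is the ratio between consecutive weights that a rolling average of the given depth would produce.

```java
/** Sketch: weights by sort position, compared with a rolling average's decay ratio. */
final class SortWeights {
    static final double GOLDEN = (1 + Math.sqrt(5)) / 2; // ~1.618

    /** Scheme as first described: newest hit (position 1) gets 1, others 1 / base^position. */
    static double original(int position) {
        return position == 1 ? 1.0 : 1.0 / Math.pow(GOLDEN, position);
    }

    /** Assumed revised scheme: base is the golden mean squared, no special case. */
    static double squared(int position) {
        return 1.0 / Math.pow(GOLDEN * GOLDEN, position);
    }

    /** Ratio between consecutive weights under a rolling average of the given depth. */
    static double rollingRatio(double rollingDepth) {
        return rollingDepth / (rollingDepth + 1);
    }
}
```

Under this reading, consecutive `squared` weights differ by a factor of about 0.382, while a rolling average of 0.7 gives a factor of about 0.412, so the two decay at a similar rate.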

Debugging Request for Rednaxela or GrubbmGait

If either of you can spare the time (and no hard feelings if you don't), can you check your rumble folder for an exception report from my robot XanderCat? I'm particularly interested in the battles between stelo.MatchupWS 1.2c and xandercat.XanderCat 6.0.2. From the scores, I would guess my robot crashed on those battles, but I haven't been able to reproduce it at home. When my robot crashes, it writes the Exception out to file (thanks to whoever originally suggested doing this; it has been helpful). The file name would be "xandercat.XanderCat_Exception.txt". It is the only information my robot ever writes to file. If you happen to find this file, perhaps you could post its contents on this page? Whether it's there or not, if you happen to check for it, thank you. -- Skotty 18:58, 11 July 2011 (UTC)

- What kind of score do you get running the same matchup in Robocode? --Darkcanuck 19:08, 11 July 2011 (UTC)

- At home XanderCat 6.0.2 pretty consistently gets a score of around 45 to 50 against stelo.MatchupWS 1.2c. It may be just a fluke that something bad happened twice against stelo.MatchupWS 1.2c, or it may be that stelo.MatchupWS somehow encourages a problem. Whatever the case, I haven't seen it at home in my own testing. In the rumble with 2 battles, XanderCat has a score of 11.54 with a PBI of -40.4. The difference in score is significant enough for me to be pretty confident that an exception was thrown. XanderCat doesn't try to recover from exceptions; when an exception occurs, it is printed to System.out, saved in a file, and the run() method exits. -- Skotty 19:20, 11 July 2011 (UTC)

This is the contents:

java.lang.NullPointerException: null
xandercat.gun.CircularGun.getFiringVector(CircularGun.java:89)
xandercat.gun.compound.CompoundGun.myBulletFired(CompoundGun.java:126)
xandercat.gun.GunController.setFireBullet(GunController.java:140)
xandercat.gun.AbstractGun.fireAt(AbstractGun.java:40)
xandercat.gun.compound.CompoundGun.fireAt(CompoundGun.java:117)
xandercat.gun.compound.CompoundGun.fireAt(CompoundGun.java:117)
xandercat.gun.compound.CompoundGun.fireAt(CompoundGun.java:117)
xandercat.group.bulletshield.BSProtectedGun.fireAt(BSProtectedGun.java:105)
xandercat.AbstractXanderBot.run(AbstractXanderBot.java:352)
net.sf.robocode.host.proxies.HostingRobotProxy.run(HostingRobotProxy.java:220)
java.lang.Thread.run(Unknown Source)

--GrubbmGait 19:37, 11 July 2011 (UTC)

- Thank you! Those intermittent bugs can be a real bear to track down. Got it fixed now. :-) -- Skotty 19:43, 11 July 2011 (UTC)

- Just fyi, same exception is logged here. Don't have to worry that it's a separate issue on the runs from my client :) --Rednaxela 23:40, 11 July 2011 (UTC)

- One problem down, but I still see there are likely some exceptions happening in version 6.1. I'm not having much luck getting them to happen on my own machine. Makes me wonder if maybe the system has something to do with it -- perhaps a missed turn is causing it somehow, and it's not missing turns on mine so I don't see it? Just a wild guess. I'm going to use RoboResearch and create a huge test bed to stress test against. Gotta find those exceptions... -- Skotty 03:51, 12 July 2011 (UTC)

Sorry to inform you, but with 6.1.2 I got the exception below. --GrubbmGait 21:07, 12 July 2011 (UTC)

java.lang.NullPointerException: null
xandercat.drive.SimpleTargetingDrive.onNextBulletWave(SimpleTargetingDrive.java:137)
xandercat.track.BulletHistory.onTurn(BulletHistory.java:123)
xandercat.AbstractXanderBot.run(AbstractXanderBot.java:377)
net.sf.robocode.host.proxies.HostingRobotProxy.run(HostingRobotProxy.java:220)
java.lang.Thread.run(Unknown Source)

- Thank you! I LOVE getting exception reports...because that means I can fix them. I don't know if fixing this will fix all the weirdness I've had with version 6.x, but it can't hurt. Maybe my rank and score will actually go up instead of down for a change. lol. -- Skotty 21:13, 12 July 2011 (UTC)
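For anyone wanting to try the same exception-to-file approach, here is a minimal sketch of the formatting step. It is not XanderCat's actual code: the class name `ExceptionReport` and its `write` method are hypothetical, and the sketch writes to a plain `OutputStream` so it can run outside Robocode. Inside a robot, the stream would presumably be a `RobocodeFileOutputStream` opened on `getDataFile(...)`, since robots are expected to write files through that class.

```java
import java.io.OutputStream;
import java.io.PrintStream;

/** Hypothetical helper for illustration; not taken from XanderCat. */
final class ExceptionReport {

    /**
     * Writes the exception class, its message, and one stack frame per line.
     * Returns false if the underlying stream reported a write error.
     */
    static boolean write(Throwable t, OutputStream out) {
        PrintStream ps = new PrintStream(out, true);
        ps.println(t.getClass().getName() + ": " + t.getMessage());
        for (StackTraceElement frame : t.getStackTrace()) {
            // StackTraceElement.toString() yields e.g. pkg.Class.method(File.java:89)
            ps.println(frame.toString());
        }
        ps.flush();
        return !ps.checkError();
    }
}
```

Calling `ExceptionReport.write(e, stream)` from the catch block gives one report per crash, with the first line naming the exception and each following line naming a frame.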

Might have to get back into things

I don't want to have yet another person pass my highest robot's ranking, and XanderCat is getting pretty close. I might have to get things in gear and start robocoding again. But considering it has been in remission for a year, I'm not sure I want my addiction to relapse. ;) -- Chase-san 00:46, 12 July 2011 (UTC)

- Don't worry. I just released a new version that lowered its rank. :-P However, I am using a brand new drive and factor array system that are somewhat in their infancy (I really haven't given them enough shakedown yet, and a few bits are incomplete), so I do expect to climb back up into the top 50 eventually. I was at #49 at version 5.1.1. Still a long ways from the top, but just give me a little more time. :-) -- Skotty 00:57, 12 July 2011 (UTC)

- Yeah, you were floating right below Seraphim, and that's why I say this. I have my pride, but her weak points are all the weak bots, and her strong points are all the strong(er) robots. To put it in perspective, she can defeat 3 robots in the top 10 (by over 50% score; only 2 if you use survival), but defeating robots in the top 10 does not get you high in the rankings. -- Chase-san 01:22, 12 July 2011 (UTC)

Do you have any method of personal contact (E-mail, Messenger (AIM, Skype, Google Talk, Yahoo), IRC, Twitter)? I wouldn't mind discussing things about Robocode. -- Chase-san 16:22, 12 July 2011 (UTC)

- I have an old AIM account, but I haven't been using it lately. If you are an active chat user, I could start firing it up on boot up again. Otherwise, I have an email account, but we need a secure way for me to send it to you that won't get picked up by spam bots. -- Skotty 03:01, 14 July 2011 (UTC)

Version 6.x Scores All Over The Map

Well...I'm officially confused. I've been seeing huge point swings against various opponents in the rumble even with minor changes, and it seems they are inconsistent with what I see at home. Though admittedly, I still need to put together a big stress test to get a larger performance sample. I'm still wondering if it may have something to do with missed turns, as I don't really know exactly what happens when a turn is missed (I can't find any docs that explain it thoroughly). Or maybe there are still exceptions happening. Or both, perhaps missed turns somehow causing exceptions. Hopefully I can figure it out because it is really driving me insane. Version 6.1.1 in the rumble actually lost a round to Barracuda, and that just doesn't happen. I'm going to try running v6.1.1 in the fast learning MC2K7 challenge tonight using RoboResearch, since that is already pretty much ready to go; not using any of the Raiko stuff because I'm just doing it to see if any exceptions or other anomalies happen. Running 500 seasons, and will check on it in the morning. -- Skotty 06:07, 12 July 2011 (UTC)

- I don't think that will help, because RoboResearch works with Robocode version 1.6.4 while the RoboRumble clients now use Robocode version 1.7.3, and I have noticed some differences between them. If you want, I can share a little app that could be called an analogue of RoboResearch with many restrictions, but it is designed to work with Robocode 1.7.3. --Jdev 08:14, 12 July 2011 (UTC)

- I have roboresearch working with 1.7.2.2 and have no problems with it. Don't see a reason why it shouldn't work with 1.7.3.0. --GrubbmGait 09:25, 12 July 2011 (UTC)

- As I remember, RoboResearch requires modifications to its Robocode message parser to work with the latest versions. But maybe that was just my own trouble :) --Jdev 09:37, 12 July 2011 (UTC)

- You may be right. I did not get it from the source, but picked up a package from someone else (Voidious I think). --GrubbmGait 09:46, 12 July 2011 (UTC)

- As a note, this type of issue makes me wish that the roborumble client uploaded replay files when it uploaded results (Haha... that would take a lot of space). Actually... it would be nice even if it just uploaded skipped turn count along with the scores. --Rednaxela 12:22, 12 July 2011 (UTC)

- This morning it was up to 137 seasons of the MC2K7 fast learning challenge with no exceptions or anomalies. The skipped turns thing is still just a theory. Maybe I should intentionally make it run slower at home to try and cause some skipped turns to see what happens. On that same tangent, it is probably about time I worked on my robot's efficiency so that it isn't a potential issue. -- Skotty 12:45, 12 July 2011 (UTC)

- On the plus side, this whole thing has prompted me to finally build some nice CPU time profiling tools. Currently taking a closer look at how long various parts of the code take to execute. -- Skotty 13:44, 12 July 2011 (UTC)

- I'm still trying to figure out what the heck is going on. In version 6.1.2, the only change was to remove a debug print line that was in a bad place, causing part of the drive code to waste a couple of milliseconds when deciding where to go for a new wave. But check out the first battle result against nat.BlackHole 2.0gamma -- my survival went from 42.86 to 8.57 (difference from version 6.1.1 to version 6.1.2). I don't know if I am on the right track on trying to improve efficiency, but something is definitely still very wrong somewhere. I suppose I should try upgrading to the latest Robocode version and see what if any change that results in (I'm currently still using 1.7.2.2) -- Skotty 21:04, 12 July 2011 (UTC)

- Definitely use the rumble version to do ALL of your testing! It can make a big difference... You could be running into bugs in the old version or possibly in the new one.

- @Rednaxela, as usual you come up with interesting rumble ideas. I don't think storing a slug of data per bot would be unreasonable. Not every replay of course, but maybe a size-limited block of custom stats, exception reports, etc. The tricky part would be getting the rumble client to export it for the server, would probably require an API function. --Darkcanuck 21:40, 12 July 2011 (UTC)

New Theory on Performance Issues

I've been wondering if changing my robot to log exceptions to file is the reason for the performance anomalies. But I couldn't figure out how that would make sense until just now. Could it be that Robocode handles the following two situations differently (or perhaps, differently depending on Robocode version)?

- Robot run() method ends due to Exception

- Robot run() method ends normally

My new theory questions whether in some instances, a robot crashes but is reactivated to finish the remaining rounds, but in other instances it is out of commission for all remaining rounds. Before I added the code to log exceptions to disk, exceptions were not caught. Now they are caught, but they are caught outside of the main while() loop, causing the run method to exit without an exception. Without knowing how Robocode internally handles the robot threads, it is hard to say what effect this might have. -- Skotty 21:51, 12 July 2011 (UTC)

- It's been a while, but I think that if the run() method exits, then your robot is done for the round! Doesn't Robocode only call that once at the beginning of each round? You definitely want to catch and handle exceptions inside the loop so that your robot can keep playing, if possible. My bots use a while(true) loop inside run() and will never exit, except for an unhandled exception. --Darkcanuck 22:20, 12 July 2011 (UTC)

- The alternative is to do a try/catch inside the while() loop. And while this would help, it would also help mask Exceptions that happen. So on one hand, I want to handle them, but on the other, it's almost better for it to crash, burn, and throw a tantrum so I will actually see the problem and correct it, rather than having it erode my robot's scores quietly. -- Skotty 22:33, 12 July 2011 (UTC)
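The two positions above are not mutually exclusive: a try/catch inside the loop can keep the robot playing while still recording every exception so it gets noticed. Here is a minimal sketch under that assumption. `GuardedLoop` and its `Turn` interface are hypothetical stand-ins for a robot's main loop, not part of the Robocode API, and the loop is bounded by `maxTurns` only so the example terminates.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical stand-in for a robot's main loop; not the Robocode API. */
final class GuardedLoop {

    /** One turn of robot logic that may throw. */
    interface Turn {
        void execute(int turnNumber);
    }

    /** Every exception caught so far, kept so it can be logged or reported loudly. */
    final List<RuntimeException> caught = new ArrayList<>();

    /** Runs maxTurns turns and returns how many finished without throwing. */
    int run(Turn turn, int maxTurns) {
        int completed = 0;
        for (int t = 0; t < maxTurns; t++) {
            try {
                turn.execute(t);
                completed++;
            } catch (RuntimeException e) {
                // Record the failure instead of letting the loop (and the round) end.
                caught.add(e);
            }
        }
        return completed;
    }
}
```

In a real robot the `caught` list could feed the exception file and a per-round warning on System.out, which addresses the worry about exceptions being masked.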

Debugging XanderCat -- What Next?

I had at least one instance where XanderCat bugged out that ran on my own machine, but no exception report was produced (see XanderCat 6.1.4 vs MagicD3 0.41). This means it wasn't a runaway while loop. My next step is to start writing out short data files for every robot, every round. Then at the end of the battle, if everything went normally, the files will be deleted. In those files, I will write out the number of bullets I fired, and the number of bullets the opponent fired, my round hit ratio, and the absolute distance I traveled during the round. When I see a battle that went bonkers, here are the scenarios I will be looking for:

- Files present for some but not all rounds. This will indicate that the robot stopped operating completely.

- Files for all rounds present, but bullets I fired dropped dramatically or went to 0 at some point during the battle. This will indicate that my gun stopped firing.

- Files for all rounds present, but my hit ratio dropped dramatically or went to 0 at some point during the battle. This will indicate that my gun was firing but the aim went bonkers.

- Files for all rounds present, but the number of bullets opponent fired dropped dramatically or went to 0 at some point during the battle. This will indicate I stopped detecting the opponent's fired bullets.

- Files for all rounds present, but absolute distance traveled dropped dramatically or went to 0 at some point during the battle. This will indicate that my drive stopped working, and my robot just started sitting still.

Anyone have any other suggestions as to what I might look for, or other ideas on how I might try to track this down? -- Skotty 13:32, 13 July 2011 (UTC)
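The checklist above can be sketched as a small classifier over the per-round records. Everything here is an assumption for illustration: the `RoundStats` and `BattleDiagnosis` names, the finding labels, the choice of the first round as the baseline, and the 25% threshold for a "dramatic" drop are not from XanderCat.

```java
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

/** Hypothetical per-round record holding the four values described above. */
final class RoundStats {
    final int myBullets;
    final int opponentBullets;
    final double hitRatio;
    final double distance;

    RoundStats(int myBullets, int opponentBullets, double hitRatio, double distance) {
        this.myBullets = myBullets;
        this.opponentBullets = opponentBullets;
        this.hitRatio = hitRatio;
        this.distance = distance;
    }
}

/** Hypothetical classifier for the scenarios listed above. */
final class BattleDiagnosis {

    /** Assumed threshold: under 25% of the first round's value counts as a dramatic drop. */
    static final double DROP_FRACTION = 0.25;

    static boolean dropped(double baseline, double value) {
        return baseline > 0 && value < baseline * DROP_FRACTION;
    }

    /** Compares every round against the first one and collects the matching scenarios. */
    static Set<String> diagnose(List<RoundStats> rounds, int expectedRounds) {
        Set<String> findings = new LinkedHashSet<>();
        if (rounds.size() < expectedRounds) {
            findings.add("robot stopped operating");
        }
        if (rounds.isEmpty()) {
            return findings;
        }
        RoundStats base = rounds.get(0);
        for (RoundStats r : rounds) {
            if (dropped(base.myBullets, r.myBullets)) {
                findings.add("gun stopped firing");
            } else if (dropped(base.hitRatio, r.hitRatio)) {
                // Only blame the aim when the gun was still firing.
                findings.add("aim went bad");
            }
            if (dropped(base.opponentBullets, r.opponentBullets)) {
                findings.add("bullet detection failed");
            }
            if (dropped(base.distance, r.distance)) {
                findings.add("drive stopped");
            }
        }
        return findings;
    }
}
```

Using the first round as the baseline is a simplification; if the first round itself is the broken one, an average over known-good battles would be a better reference.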

What I've done is look at every single loop in my 4000+ lines of code, checking that each one has an exit clause, and if there isn't one hardcoding one in (using a countdown). I also put a try/catch around all my code so all my other code logs exceptions to disk. Otherwise, unless you have a security manager problem everything *should* be caught. In theory =) --Skilgannon 14:19, 13 July 2011 (UTC)
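The countdown idea can be wrapped in a small helper so that no loop is able to spin forever. This is only a sketch of that technique: `BoundedLoop` is a hypothetical name, and the right iteration cap depends on the loop being guarded.

```java
import java.util.function.BooleanSupplier;

/** Hypothetical helper illustrating a hard-coded exit clause via a countdown. */
final class BoundedLoop {

    /**
     * Repeats step while condition holds, but at most maxIterations times.
     * Returns true if the condition became false on its own,
     * false if the countdown ran out first.
     */
    static boolean run(BooleanSupplier condition, Runnable step, int maxIterations) {
        int remaining = maxIterations;
        while (condition.getAsBoolean()) {
            if (remaining-- <= 0) {
                return false; // countdown exhausted: bail out instead of hanging
            }
            step.run();
        }
        return true;
    }
}
```

A false return value is worth logging, since it means the loop would otherwise have kept running and possibly caused skipped turns.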

How about trying the following: Set up a script that runs Robocode repeatedly, with parameters that cause it to run XanderCat 6.1.4 vs MagicD3 0.41 AND save replay files, and have your script delete the replay file whenever the resulting score is above 50%? I've done command line scripting of battles before; it's fairly trivial. I suggest this method because it should catch the problem in action regardless of the cause. --Rednaxela 14:34, 13 July 2011 (UTC)
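The "keep the replay only when the battle went badly" step of that suggestion might look like the sketch below. Launching Robocode and parsing its results file are deliberately left out; `ReplayPruner`, its method names, and the reading of "score" as percentage share of the combined score are assumptions for illustration.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;

/** Hypothetical post-battle step: discard replays of battles that went normally. */
final class ReplayPruner {

    /** Percentage share of the combined score, 0 if nobody scored. */
    static double scoreShare(double myScore, double opponentScore) {
        double total = myScore + opponentScore;
        return total == 0 ? 0 : 100.0 * myScore / total;
    }

    /**
     * Deletes the replay when my share is above 50%.
     * Returns true if the replay was kept for inspection.
     */
    static boolean keepOnlyIfBad(Path replay, double myScore, double opponentScore) {
        if (scoreShare(myScore, opponentScore) > 50.0) {
            try {
                Files.deleteIfExists(replay);
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
            return false;
        }
        return true;
    }
}
```

A driver script would call `keepOnlyIfBad` once per battle, so only the replays of the anomalous runs accumulate on disk.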

- Switching to the most recent client for my testing was a good idea. Things are definitely different in version 1.7.3.0 than they were in 1.7.2.2. I think, for one, I have potentially fallen victim to a change in how Bullets are handled. My overall hit ratio in 1.7.3.0 keeps coming back 0, whereas it worked fine in 1.7.2.2. I have to look into it more, but I think it has to do with how I am handling the Bullets. I vaguely recall once seeing some Robocode issue related to Bullets, but I don't recall where at the moment. I'll have to dig into it more... -- Skotty 21:29, 13 July 2011 (UTC)